Multi-cloud environments with Google Anthos

21 December 2020

Tiboo Augustijnen

Anthos is a managed application platform for enterprises that want faster modernization and greater consistency in a hybrid and multi-cloud world. It provides a consistent experience for your daily development and operational tasks across different environments. A common problem with any single cloud provider is “vendor lock-in”. Anthos tries to solve it with open standards and a unified computing model, giving you consistent service management and policy enforcement across clouds through a centralized control plane.

Key features

To deliver all of the above, Anthos offers the following features:

- Kubernetes-based Container Orchestration and Management

Run Kubernetes clusters wherever you like. Anthos delivers a consistent, simple and validated way of installing and upgrading your clusters.

- Policy and security automation

Your company can define, automate and enforce policies across multiple platforms to comply with its security requirements. Anthos Config Management uses a central Git repository as the source of truth: it syncs that configuration to all enrolled Kubernetes clusters, and it detects and reverts changes that make a cluster drift away from it.
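
The desired-state model behind Anthos Config Management can be illustrated with a small reconcile loop. The Python sketch below is a simplification under stated assumptions: the `Cluster` class, the resource keys and the in-memory `desired` dictionary are hypothetical stand-ins for a Git repository and the Kubernetes API, and the real product handles many more cases (for example, resources that exist on a cluster but not in the repository).

```python
from dataclasses import dataclass, field


@dataclass
class Cluster:
    """Hypothetical cluster model: a name plus its live resources (key -> spec)."""
    name: str
    resources: dict = field(default_factory=dict)


def detect_drift(desired: dict, cluster: Cluster) -> dict:
    """Return resources that are missing from the cluster or differ from the desired spec."""
    return {
        key: spec
        for key, spec in desired.items()
        if cluster.resources.get(key) != spec
    }


def reconcile(desired: dict, clusters: list) -> dict:
    """Overwrite drifted resources with the desired spec; report what changed per cluster."""
    report = {}
    for cluster in clusters:
        drift = detect_drift(desired, cluster)
        for key, spec in drift.items():
            cluster.resources[key] = dict(spec)  # the repository version always wins
        report[cluster.name] = sorted(drift)
    return report


# Hypothetical desired state, standing in for the central Git repository.
desired = {
    "namespace/payments": {"labels": {"env": "prod"}},
    "networkpolicy/payments/deny-all": {"policyTypes": ["Ingress"]},
}
eu = Cluster("gke-eu", {"namespace/payments": {"labels": {"env": "dev"}}})  # drifted
on_prem = Cluster("on-prem", dict(desired))  # already compliant

print(reconcile(desired, [eu, on_prem]))
# {'gke-eu': ['namespace/payments', 'networkpolicy/payments/deny-all'], 'on-prem': []}
```

In this model the repository is the single source of truth, so a manual change on one cluster is overwritten rather than propagated to the others.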

- Istio-based Service Mesh with built-in visibility

Anthos Service Mesh manages and secures the traffic in your cluster while monitoring your applications.

- Containerize your existing workloads

Migrate for Anthos can automatically convert your team’s existing applications running in VMs into containers, making the move to Anthos easier and modernising your workloads.

- Day 2 operations adoption

Reduce the cost of maintaining, upgrading and patching virtualized infrastructure by using modern CI/CD pipelines, Infrastructure-as-Code, desired-state configuration and image-based application deployment.

- Serverless in your clusters

Cloud Run for Anthos is a flexible serverless development platform on Google Kubernetes Engine (GKE). It provides custom machine types, VPC networking and integration with existing Kubernetes-based solutions. All of this is made possible by Knative, an open-source project that supports serverless workloads on Kubernetes.
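
Because Cloud Run for Anthos is built on Knative, a workload is described as a Knative Service resource. The Python sketch below builds a minimal manifest of that kind as a dictionary and prints it as JSON. The `serving.knative.dev/v1` API group and `Service` kind come from Knative; the helper function, service name and image path are hypothetical examples.

```python
import json


def knative_service(name: str, image: str, namespace: str = "default") -> dict:
    """Build a minimal Knative Serving v1 Service manifest as a plain dict."""
    return {
        "apiVersion": "serving.knative.dev/v1",
        "kind": "Service",
        "metadata": {"name": name, "namespace": namespace},
        "spec": {
            "template": {
                "spec": {
                    # One container image per revision; Knative scales its pods with traffic.
                    "containers": [{"image": image}],
                }
            }
        },
    }


# Hypothetical service name and image path; substitute your own.
manifest = knative_service("hello", "gcr.io/my-project/hello:1.0")

# kubectl accepts JSON manifests as well as YAML, e.g. `kubectl apply -f hello.json`.
print(json.dumps(manifest, indent=2))
```

Generating the manifest in code is just one option; the same structure is usually written by hand in YAML.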

Use Cases

The Anthos article describes 3 use cases for adopting Anthos in your company. The first explains how you can use Anthos to modernize your Java applications in 3 stages. The second, Anthos at the edge, lets you run your applications closer to your end users.

The use case that interests us most is the third, “modern CI/CD with Anthos”, because most of the time we work with pipelines in Azure DevOps, Jenkins or other CI/CD tools. Let’s take a closer look at the details of this use case and the benefits Anthos provides for a DevOps engineer.

- Specialised, direct guidance is always welcome: experienced Kubernetes and cloud engineers help you with your pipelines, policy management and application configuration.

- Many people know the feeling of having chosen expensive, non-portable software because it was the best option at the time. Google uses open-source software to make the experience vendor-neutral and portable.

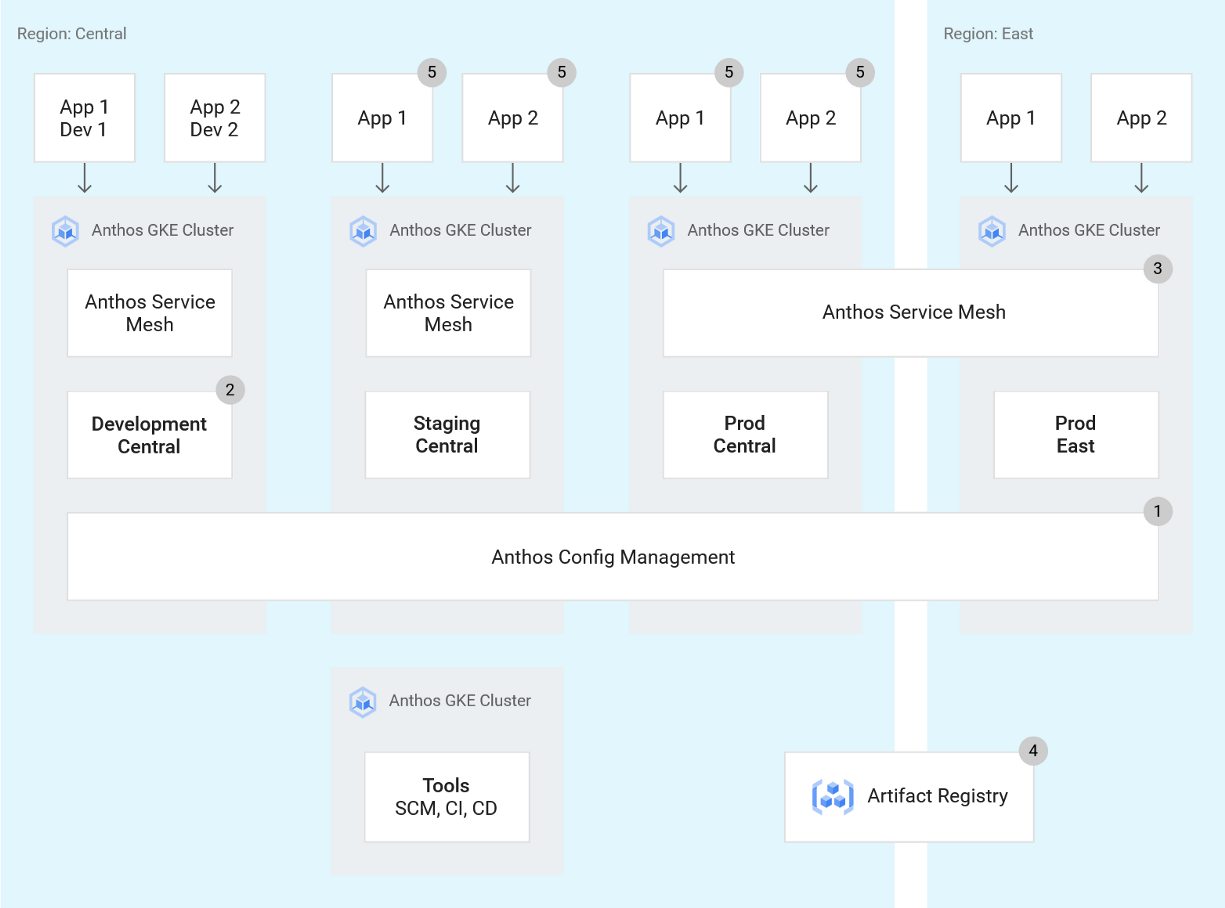

Google provides a plan for how the CI/CD pipeline works, shown in the image above. First of all, Anthos Config Management (ACM) ensures that every cluster is in the same state and that all deployments comply with the company’s policies. Developers work on their applications in a dedicated development cluster using Skaffold. Anthos Service Mesh spans 2 clusters in separate regions, providing service discovery and resilience across them. Artifact Registry stores all your container images, and those images are checked by Binary Authorization, a deploy-time security control that ensures only trusted container images are deployed on Google Kubernetes Engine (GKE). As a result, your application is deployed consistently and easily to every environment at every stage of its life cycle.
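
The core idea of Binary Authorization, admitting an image only when the required attestations exist for its exact digest, can be sketched as a simple predicate. This is a hypothetical Python model, not the Binary Authorization API: the attestor names, registry path and digest are made up, and real attestations are cryptographically signed and evaluated by the service at deploy time.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Attestation:
    """Hypothetical record: an attestor vouches for one image digest (signature checks omitted)."""
    attestor: str
    image_digest: str


def is_deployable(image_ref: str, required_attestors: set, attestations: list) -> bool:
    """Admit an image only if every required attestor has attested its exact digest."""
    # Attestations are bound to digests, so this sketch rejects tag-only references.
    if "@sha256:" not in image_ref:
        return False
    digest = image_ref.split("@", 1)[1]
    attested_by = {a.attestor for a in attestations if a.image_digest == digest}
    return required_attestors <= attested_by


# Hypothetical attestor names, registry path and digest.
REQUIRED = {"built-by-ci", "vulnerability-scanned"}
digest = "sha256:" + "ab" * 32
image = f"europe-docker.pkg.dev/my-project/apps/shop@{digest}"

attestations = [Attestation("built-by-ci", digest)]
print(is_deployable(image, REQUIRED, attestations))  # False: no scan attestation yet

attestations.append(Attestation("vulnerability-scanned", digest))
print(is_deployable(image, REQUIRED, attestations))  # True

tagged = "europe-docker.pkg.dev/my-project/apps/shop:latest"
print(is_deployable(tagged, REQUIRED, attestations))  # False: tag, not digest
```

Keying on the digest rather than the tag matters because a tag such as `latest` can be moved to a different, unverified image.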

Anthos Requirements

To start with Google Anthos, you have to create a new project with no existing resources. Billing must be enabled on the project, through either an Anthos subscription or the pay-as-you-go option. The Anthos API must be enabled to access all Anthos features. When you add GKE clusters to your environment using Connect, Anthos knows which clusters are registered and should be charged. An environ is a Google Cloud concept for organising the internal and external clusters that are part of Anthos.
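
These requirements read like a checklist, so here is a Python sketch that encodes them. `ProjectState` and the wording of each step are hypothetical; only `gcloud services enable anthos.googleapis.com` refers to a real CLI call (enabling the Anthos API). The sketch does not query Google Cloud; it only reports which steps a given snapshot of a project still lacks.

```python
from dataclasses import dataclass, field


@dataclass
class ProjectState:
    """Hypothetical snapshot of a Google Cloud project, for illustration only."""
    project_id: str
    existing_resources: int = 0
    billing_enabled: bool = False
    enabled_apis: set = field(default_factory=set)
    registered_clusters: list = field(default_factory=list)


def missing_prerequisites(state: ProjectState) -> list:
    """Return the Anthos prerequisites that this project snapshot does not yet meet."""
    todo = []
    if state.existing_resources:
        todo.append("start from a new project with no existing resources")
    if not state.billing_enabled:
        todo.append("enable billing (Anthos subscription or pay-as-you-go)")
    if "anthos.googleapis.com" not in state.enabled_apis:
        todo.append(f"gcloud services enable anthos.googleapis.com --project={state.project_id}")
    if not state.registered_clusters:
        todo.append("register at least one cluster with Connect")
    return todo


# Hypothetical project ID; billing is on, but the API and Connect steps are still open.
state = ProjectState("anthos-demo", billing_enabled=True)
for step in missing_prerequisites(state):
    print("-", step)
```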

Pricing

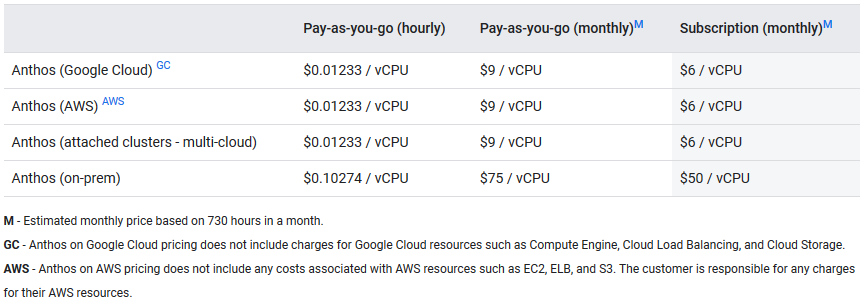

Anthos is not free: charges apply to all managed Anthos clusters, both in the cloud and on-prem. Costs are billed hourly, based on the number of Anthos vCPUs managed by the Anthos control plane. Only the schedulable vCPUs in user clusters count; vCPUs in the admin cluster and on the master node are excluded. As with most pricing sheets, committing for a longer period gets you a discount. As you can see, the price for Anthos on-prem is a lot higher than for Anthos in the cloud. The cost is lower on GCP and AWS because the resources your cluster uses there (Compute Engine, EC2, ELB, …) are not included in the Anthos price; you pay for them separately to the cloud provider.
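
The billing rule boils down to simple arithmetic: billable vCPUs × hourly rate × hours, minus any commitment discount. The Python sketch below encodes it. The node roles, the 730-hours-per-month approximation and the rate and discount in the example are hypothetical placeholders, not Google’s actual prices, so check the current pricing sheet for real numbers.

```python
from dataclasses import dataclass

HOURS_PER_MONTH = 730  # common approximation: 8,760 hours per year / 12


@dataclass
class Node:
    vcpus: int
    role: str  # hypothetical labels: "worker" (user cluster), "master" or "admin"


def billable_vcpus(nodes: list) -> int:
    """Count schedulable user-cluster vCPUs; admin-cluster and master-node vCPUs are excluded."""
    return sum(node.vcpus for node in nodes if node.role == "worker")


def monthly_anthos_fee(nodes: list, rate_per_vcpu_hour: float, commit_discount: float = 0.0) -> float:
    """Estimate the Anthos fee only; on GCP/AWS the underlying VMs are billed separately."""
    return billable_vcpus(nodes) * rate_per_vcpu_hour * HOURS_PER_MONTH * (1 - commit_discount)


nodes = [Node(4, "worker"), Node(4, "worker"), Node(4, "worker"), Node(4, "master"), Node(2, "admin")]
HYPOTHETICAL_RATE = 0.01  # placeholder price per vCPU-hour, NOT a real Anthos rate

print(billable_vcpus(nodes))  # 12
print(round(monthly_anthos_fee(nodes, HYPOTHETICAL_RATE, commit_discount=0.2), 2))  # 70.08
```

Only the 3 worker nodes count here: the master node and the admin cluster add 6 vCPUs of infrastructure but nothing to the Anthos fee.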

Our Questions

When this new product came to market, we at FlowFactor saw opportunities and were immediately interested, but we still had a few questions.

- Can you only run Google Cloud workloads?

Migrate for Anthos converts VM-based workloads from on-premises VMware, AWS, Azure or Compute Engine to Google Kubernetes Engine or Anthos. This means you can still run your non-Google workloads once you have migrated them to GKE or Anthos.

- Is it only container-based or is it possible to run VMs?

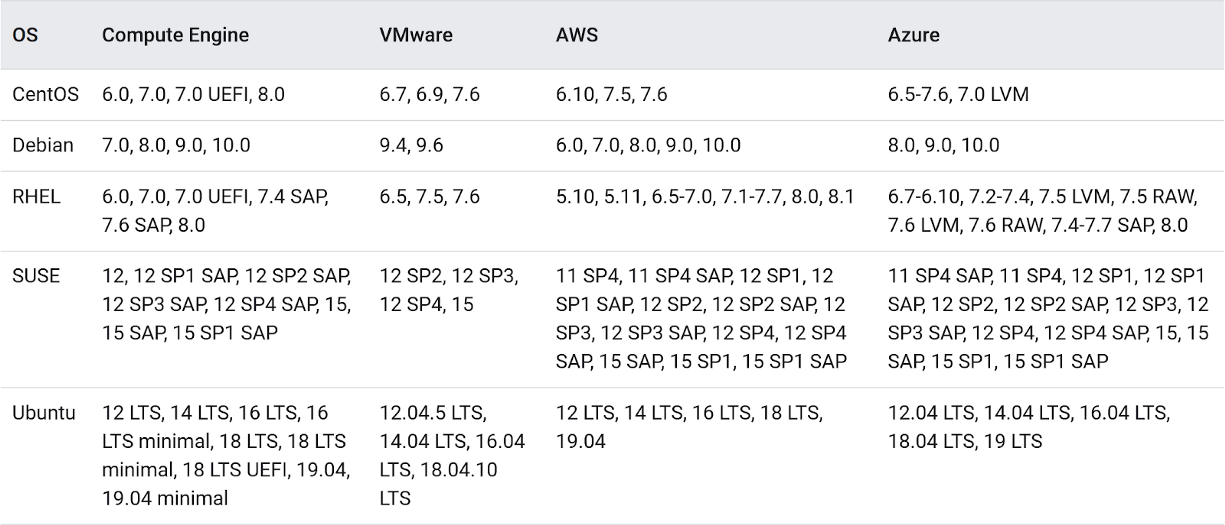

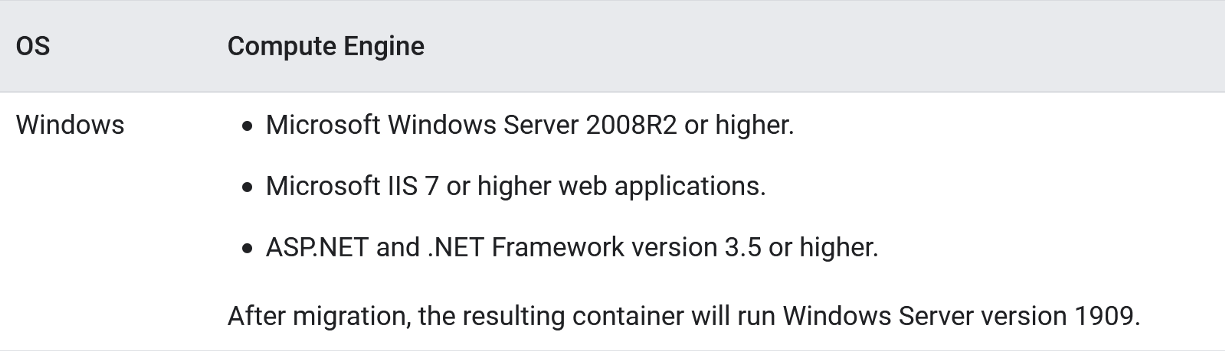

It is not possible to run VMs because it is all about running containers on their Anthos Clusters which are essentially managed Kubernetes deployments. Like the previous answer, you need to use their “Migrate for Anthos” if you want your VMs running on their clusters. Here is a list of compatible VM operating systems for Linux and Windows:

- Can workloads that run on-premises scale out to Anthos clusters on GCP or AWS?

Anthos supports vertical, horizontal and cluster scaling, but the documentation does not mention expanding on-prem workloads to the cloud. I would expect this to become possible, but only in the future.

- Are there any training courses or certificates?

There are a few hands-on labs, quests and one course on Anthos and GKE. The course “Architecting Hybrid Cloud Infrastructure with Anthos” has lectures and hands-on labs on Google Kubernetes Engine (GKE), GKE Connect, Istio service mesh and Anthos Config Management, to help you modernize, manage and observe applications using Kubernetes. The course has 2 prerequisites: “Google Cloud Platform Fundamentals: Core Infrastructure” and “Architecting with GKE”, or equivalent experience for each. It looks like interesting study material for Anthos and GKE, which can be found here.

Final Thoughts

Anthos looks really promising, and we will follow its progress closely. Our clients are sometimes afraid of vendor lock-in and all the problems that come with it, so Anthos is a great solution, even though only 2 cloud providers (GCP and AWS) are supported at the moment. The ability to manage your on-prem clusters is also a nifty feature, because most companies already have some on-prem infrastructure that could be used with Anthos. Azure Stack and AWS Outposts are alternatives, but they can’t deploy to clusters on other cloud services; besides container clusters, they also offer databases, IoT and data analytics. IBM MultiCloud Manager is the most comparable alternative. The IBM Cloud Pak for Multicloud Management offering is an application-centric, AI-driven management platform designed to provide full visibility and control over GKE, IBM Kubernetes Service, AKS, EKS, OCP on AWS and on-premises clusters. Feel free to comment below on Azure Stack, AWS Outposts and IBM MCM; I’m eager to hear about them.
